…the contradictory opposite of a copulative proposition is a disjunctive proposition composed of the contradictory opposites of its parts… the contradictory opposite of a disjunctive proposition is a copulative proposition composed of the contradictories of the parts of the disjunctive proposition.
― William of Ockham (1355)

or:

$$ \begin{aligned} \sim\!(P \land Q) &\leftrightarrow (\sim\!P \,\,\lor \sim\!Q) \\ \sim\!(P \lor Q) &\leftrightarrow (\sim\!P \,\,\land \sim\!Q) \end{aligned} $$
― Augustus De Morgan (1860)
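Written as code, the same two laws become a four-row truth table. This is a minimal Python sketch using only the standard library, and the function name is my own. It checks both equivalences on every assignment of truth values to P and Q:

```python
from itertools import product


def de_morgan_holds() -> bool:
    """Check both De Morgan laws on all four assignments of P and Q."""
    for p, q in product([False, True], repeat=2):
        # not (P and Q)  <->  (not P) or (not Q)
        if (not (p and q)) != ((not p) or (not q)):
            return False
        # not (P or Q)  <->  (not P) and (not Q)
        if (not (p or q)) != ((not p) and (not q)):
            return False
    return True


print(de_morgan_holds())  # True
```

Four rows are enough because each law involves only two propositional variables.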

Any impatient student of mathematics or science or engineering who is irked by having algebraic symbolism thrust upon him should try to get along without it for a week.
― Eric Temple Bell

Mathematical notation is not finished. You can tell, because so much of it is new, and because so many smart people struggle with it as it is.

Still, a set of conventions has hardened in the last 100 years. Conventional maths is as terse as possible, monochrome, and unfriendly; it operates at full generality; and it gives its objects bad, undescriptive names.

Now, aside from the distress it causes the beginner, terseness is good: it lets us fit more in our heads at once, and so go faster, and so go further. The move from prose to symbols was objectively an improvement, even though it moved the appearance of maths further from human intuition.

What else is good about the conventional style? It is minimalist; it does not patronise; it is tasteful and grown-up; its generality saves a lot of ink; its leaving almost everything unsaid saves a lot of time. To master a conventional serious proof is to overcome an adversary, to simultaneously prove something about oneself.

Here are some different ways of doing it, less optimised for past masters.

Colour

Use colours to instantly relate symbols to explanations, whether verbal or graphical, as Eric Jang does in his incredible ‘Dijkstra’s in Disguise’.

This is also an instance of giving people several angles of attack on the same concept.
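Here is a minimal sketch of the technique in LaTeX. It is not Eric Jang’s figure: the equation is the standard edge-relaxation step of Dijkstra’s algorithm, and the colours are arbitrary choices. Teal marks the current best distance to \(v\), orange the settled distance to \(u\), and purple the weight of the edge from \(u\) to \(v\). A diagram or sentence explaining the step would reuse the same three colours for the same three things. The sketch assumes a renderer that supports \textcolor, such as LaTeX with xcolor, MathJax, or KaTeX.

$$ \textcolor{teal}{d(v)} \leftarrow \min\big(\textcolor{teal}{d(v)},\; \textcolor{orange}{d(u)} + \textcolor{purple}{w(u, v)}\big) $$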

(There’s mixed evidence about coloured text and comprehension in general, but the studies all focus on ordinary prose and I doubt they transfer to understanding formulae with dozens of symbols.)

Comments

For example, you may come across definitions like this: “A finite state automaton is a quintuple \((Q, \Sigma, q_0, F, \delta)\) where \(Q\) is a finite set of states \(\{q_0, q_1, \dots, q_n\}\), \(\Sigma\) is a finite alphabet of input symbols, \(q_0\) is the start state, \(F\) is the set of final states \(F \subseteq Q\), and \(\delta: Q \times \Sigma \to Q\) is the transition function.”

That definition should be taken outside and shot.

rigour follows insight, and not vice versa.
― James Stone
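For contrast, here is the same quintuple with descriptive names and one comment per component, as a Python sketch. The class, field, and example names are my own, not a standard API. The sketch also assumes the usual deterministic reading: \(F\) is a subset of \(Q\), and \(\delta\) is a function from (state, symbol) pairs to states.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FiniteStateAutomaton:
    """A deterministic finite state automaton, one field per part of the quintuple."""
    states: frozenset[str]                  # Q: every state the machine can be in
    alphabet: frozenset[str]                # Sigma: the symbols it can read
    start_state: str                        # q_0: where every run begins
    accepting_states: frozenset[str]        # F: the final states, a subset of Q
    transition: dict[tuple[str, str], str]  # delta: (state, symbol) -> next state

    def accepts(self, word: str) -> bool:
        """Read `word` one symbol at a time; accept iff we end in an accepting state."""
        state = self.start_state
        for symbol in word:
            if symbol not in self.alphabet:
                raise ValueError(f"{symbol!r} is not in the alphabet")
            state = self.transition[(state, symbol)]
        return state in self.accepting_states


# Example: accept binary strings containing an even number of 1s.
even_ones = FiniteStateAutomaton(
    states=frozenset({"even", "odd"}),
    alphabet=frozenset({"0", "1"}),
    start_state="even",
    accepting_states=frozenset({"even"}),
    transition={
        ("even", "0"): "even", ("even", "1"): "odd",
        ("odd", "0"): "odd", ("odd", "1"): "even",
    },
)

print(even_ones.accepts("1001"))  # True
print(even_ones.accepts("1011"))  # False
```

The `accepts` method is the part the prose definition leaves implicit: what it means for the machine to run on an input.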

Michael Sipser has good comments on all the proofs in his great CS book, “Introduction to the Theory of Computation”, including the diagonalisation proof.

Evan Chen’s book for bright high-schoolers is suitably friendly too.

For learning material (rather than research writeups), the steps of a proof could be tagged as “routine”, “creative”, “tricky”, or “key” (h/t Qiaochu). These would be best as sidenotes.

Further: why is there no metadata? A text could list up front the fields and theorems a result depends on; how important the result is, and for what; some proofs with a similar flavour; or, for fun, the newest result the proof needs, and so the earliest date it could have been proved.
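The last two questions become mechanical once the dependencies are written down. In this Python sketch, the `RESULTS` table, the result names, and the years are hypothetical placeholders, not real history. The code walks a result’s transitive dependencies and takes the newest proof date among them:

```python
from typing import Optional

# Hypothetical toy data: result -> (year proved, results its proof uses directly).
# The names and years are illustrative placeholders, not real history.
RESULTS: dict[str, tuple[int, list[str]]] = {
    "Lemma A": (1900, []),
    "Lemma B": (1935, ["Lemma A"]),
    "Lemma C": (1960, []),
    "Theorem D": (1975, ["Lemma B", "Lemma C"]),
}


def transitive_dependencies(result: str) -> set[str]:
    """Every result that `result` relies on, directly or indirectly."""
    seen: set[str] = set()
    to_visit = list(RESULTS[result][1])
    while to_visit:
        dependency = to_visit.pop()
        if dependency not in seen:
            seen.add(dependency)
            to_visit.extend(RESULTS[dependency][1])
    return seen


def earliest_possible_year(result: str) -> Optional[int]:
    """Year of the newest result the proof needs (None if it needs none of them)."""
    years = [RESULTS[dependency][0] for dependency in transitive_dependencies(result)]
    return max(years, default=None)


print(earliest_possible_year("Theorem D"))  # 1960
```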

An important subset of commentary is historical notes which explain why something apparently arbitrary is not, as with Carl Meyer’s “Matrix Analysis”.

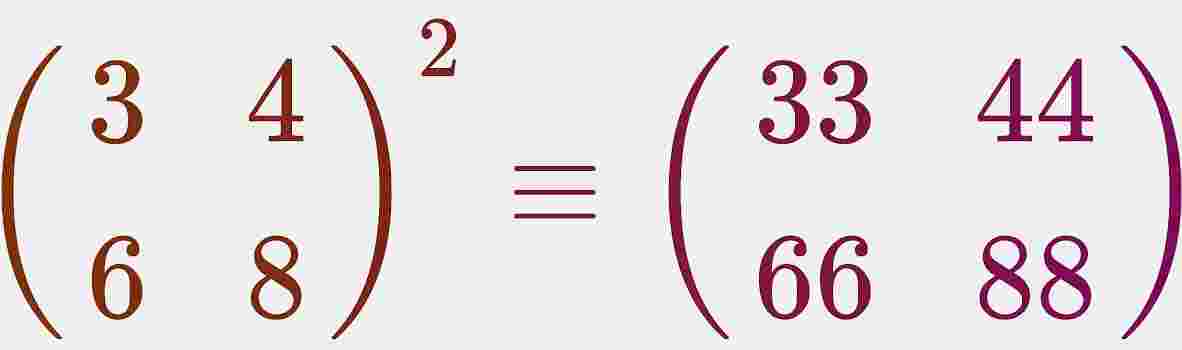

Motivating examples

A good stock of examples, as large as possible, is indispensable for a thorough understanding of any concept, and when I want to learn something new, I make it my first job to build one.
― Paul Halmos

Most maths writing jumps straight to the general definitions. But at least some people need to work up from examples and counterexamples instead.

This is another place where Chen’s basic book beats high-status university texts.

Literal examples are just one answer to the question “Why should I care about this theory?”. Maybe authors think that question is wishy-washy, but examples are not subjective, just partial. I’m not even asking for – horror of horrors! – applications. Maybe generality feels strong: to solve all examples at once, without looking at them, is to rise above the objects.

There is an ignorant way of asking “Why should I care?”: the way with no sense of aesthetics, curiosity, or patience; the philistine way that cannot see any value without an application, or money, behind it. Maybe this is how mathematicians hear the question, and why they shun it.

Composing subproofs

Proof by induction can be written as an algorithm with two subroutines, BaseCase and InductiveStep.

You then see that for any given instance you just need to write those two subroutines. I find this much easier to understand.
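Here is a minimal Python sketch of that shape. The representation is my own: the subroutines are BaseCase and InductiveStep in snake_case, the claim \(0 + 1 + \dots + n = n(n+1)/2\) is just an example, and the “evidence” is an (n, running sum) pair that each step checks. A real proof does the inductive step once, for a symbolic \(k\). This sketch only replays the step for concrete values, but the dependency structure is the same.

```python
from typing import Callable, TypeVar

Evidence = TypeVar("Evidence")


def prove_by_induction(n: int,
                       base_case: Callable[[], Evidence],
                       inductive_step: Callable[[int, Evidence], Evidence]) -> Evidence:
    """Turn evidence for P(0) into evidence for P(n), one step at a time."""
    evidence = base_case()                      # P(0)
    for k in range(n):
        evidence = inductive_step(k, evidence)  # P(k) -> P(k + 1)
    return evidence


# One instance: P(n) says 0 + 1 + ... + n == n(n + 1)/2.
# Here "evidence" is just (n, running_sum), checked at every step.
def base_case() -> tuple[int, int]:
    assert 2 * 0 == 0 * 1                       # P(0): the empty-ish sum is 0
    return (0, 0)


def inductive_step(k: int, evidence: tuple[int, int]) -> tuple[int, int]:
    _, total = evidence
    assert 2 * total == k * (k + 1)             # hypothesis: P(k)
    new_total = total + (k + 1)                 # add the next term
    assert 2 * new_total == (k + 1) * (k + 2)   # conclusion: P(k + 1)
    return (k + 1, new_total)


print(prove_by_induction(10, base_case, inductive_step))  # (10, 55)
```

`prove_by_induction` never looks inside the evidence, so a new claim only needs a new pair of subroutines.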

More generally I don’t see much dependency inversion in proofs. Long proofs will include a sketch of the strategy, but mostly not with this lucidity. (Exceptions: Sipser, Chen.)

Maybe this only works if you know some programming before you do higher maths (a lamentably rare condition).

Here’s an unfair but illuminating rant:

Imagine I asked you to learn a programming language where:

- All the variable names were a single letter, and where programmers enjoyed using foreign alphabets, glyph variation and fonts to disambiguate their code from meaningless gibberish.

- None of the functions were documented, and instead the API docs consisted of circular references to other pieces of similar code, often with the same names overloaded into multiple meanings, often impossible to Google.

- None of the sample code could be run on a typical computer; in fact, most of it was pseudo-code lacking a definition of input and output, or even the environment it was supposed to run in.

Graph dependencies

Is maths a directed graph of theorem to theorem? Close enough! But even a chapter-level dependency graph can be helpful.
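Even without a drawing, a chapter graph gives the reader every valid reading order. This Python sketch uses the standard library’s `graphlib` (Python 3.9+), and the chapter names and edges are hypothetical:

```python
from graphlib import TopologicalSorter

# Hypothetical chapter-level dependencies: chapter -> chapters it assumes.
CHAPTER_PREREQUISITES: dict[str, list[str]] = {
    "Sets and functions": [],
    "Groups": ["Sets and functions"],
    "Rings": ["Groups"],
    "Linear algebra": ["Sets and functions"],
    "Modules": ["Rings", "Linear algebra"],
}


def reading_order(prerequisites: dict[str, list[str]]) -> list[str]:
    """One order in which every chapter comes after everything it depends on."""
    return list(TopologicalSorter(prerequisites).static_order())


for chapter in reading_order(CHAPTER_PREREQUISITES):
    print(chapter)
```

If the graph contains a cycle, `static_order()` raises `graphlib.CycleError`. That error is itself a useful signal that the chapters’ dependencies are muddled.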

Tweaks

- Physicists have a nicer way of marking the variable of integration. Instead of putting \(\text{d}x\) at the end, they put it at the start. This saves on brackets and rereading.
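For a nested integral, the physicists’ order puts each variable next to its own limits. You can then read off which bound belongs to which variable without matching brackets. Both forms below denote the same iterated integral, where \(f\) is an arbitrary integrand:

$$ \int_0^1 \text{d}x \int_0^x \text{d}y \; f(x, y) \qquad\text{vs.}\qquad \int_0^1 \left( \int_0^x f(x, y) \, \text{d}y \right) \text{d}x $$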

Visuals

It seems insane that the study of change is mostly taught without any, y’know, animations.

The limit case of visual mathematics is the lovely proof without words.
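A classic proof without words shows \(1 + 3 + 5 + \dots + (2n - 1) = n^2\). It cuts an \(n \times n\) square into L-shaped layers, and the \(k\)-th layer has \(2k - 1\) cells. Below is a plain-text rendering as a Python sketch. The function name is my own, and the labels stop lining up once \(n > 9\).

```python
def odd_number_square(n: int) -> str:
    """Draw an n-by-n square whose cells are labelled by their L-shaped layer."""
    rows = []
    for row in range(n):
        # cell (row, col) lies in layer max(row, col) + 1
        rows.append(" ".join(str(max(row, col) + 1) for col in range(n)))
    return "\n".join(rows)


print(odd_number_square(4))
# 1 2 3 4
# 2 2 3 4
# 3 3 3 4
# 4 4 4 4
```

Count the 4s (seven of them), then the 3s (five), and so on: each layer adds the next odd number, and together they fill the square.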

We don’t need to endorse any pseudoscience about “learning styles” to think that there are areas of mathematics for which even symbols are not the most efficient delivery.

Caveats

I’m not claiming that the above are the most important problems with maths teaching. Focussing on mechanical manipulation over insight, and on reproduction rather than creativity, seem like more dire mistakes.

All of academic science is stuck on many of the above, stuck in the 90s. Maybe worst is the stagnation of the conventional paper: static in visuals; never revised unless gross misconduct can be proven; completely decoupled from its justifying evidence and code. Was the last big innovation the hyperlink, 1995? Here are two examples of great post-papers, and a manifesto. (My field, machine learning, is unusually tolerant of blog posts, but is still a long way from giving them equal respect, even when it’s warranted.)

mathematics is, to a large extent, the invention of better notations
― Feynman

See also

- George on leaky abstractions and what will replace mathematics

- Jeremy Kun on some real shit

- Terry Tao on the mathematics of mathematical notation.

- Terry Tao on good notation

- Quantum Country

- https://www.bhauth.com/blog/thinking/terminology%20as%20convolution.html

- Communicating with Interactive Articles

Credit to John Lapinskas for the induction algorithm.