Insurance isn't necessarily a scam

Two of my friends believe insurance is a scam, for two different reasons. One is an argument from insurer profit, the other an argument from inequality. They’re sometimes right - and when they’re wrong it’s because of interesting facts about human nature.

I limit the following to for-profit, personal, noncompulsory insurance (as opposed to mutuals, insurance taken out by organisations 0, insurance used to compensate people we harm, or insurance compelled by law). I also assume you’re honest with the insurer (you don’t hold risk-relevant info that they lack).

Some jargon

- premium: money paid to the insurer for cover.

- underwriting profit = premiums - claim amounts - operating costs - taxes.

- investment profit: return to the insurer from using premiums as free capital.

- expected value: the average payout, taking into account how likely it is. EV = probability of win x payout if you win.

- utility function: an abstraction about what we like; the shape of our tastes; a mapping from event -> subjective goodness of event.

- self-insurance: paying for losses out of your own savings.

- general insurance: anything but life insurance.

- property-casualty insurance: cover for personal property (cars, homes) and liabilities.

1. Argument from profit

1. Insurance firms make profit: their revenue > their costs.

2. Their costs are *at least* the true expected loss plus operating costs.

3. Therefore average premiums are higher than the expected loss.

4. Therefore the average honest policyholder is making an expected loss.
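To make steps (1)-(4) concrete, here is a minimal sketch in Python. The numbers (a 1-in-100 chance of a £5,000 loss, a 30% loading for costs and profit) are made up for illustration and come from no real insurer; `expected_money_change` is a hypothetical helper name.

```python
def expected_money_change(premium, p_loss, loss):
    """Expected change in wealth from buying full cover for one period,
    compared with going uninsured. Negative means you lose money on average."""
    insured = -premium            # pay the premium; any loss is reimbursed
    uninsured = -p_loss * loss    # bear the loss yourself with probability p_loss
    return insured - uninsured


# Hypothetical numbers, for illustration only.
p_loss, loss = 0.01, 5_000
expected_loss = p_loss * loss     # about 50
premium = expected_loss * 1.3     # 30% loading on top of the expected loss
print(expected_money_change(premium, p_loss, loss))
```

With these toy numbers the policyholder is about £15 a year worse off on average. So far this is only arithmetic about averages.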

This becomes normative if we accuse the consumer of inconsistent preferences:

5. People want more money.

6. People buy insurance, which is on average a loss of money, by (4).

7. Therefore people are inconsistent and should stop (5) or (6).

This has two things wrong with it: one nonfatal, and one fatal but slightly arcane.

Insert ultimately irrelevant clarification

Most insurer profit is "investment gains" - the money they make off investing the money you give them - and not the "underwriting profit", the direct flow from policyholders. (Most years have no property-casualty underwriting profit: they pay out more in claims than they get in premium. 24 )

But these capital gains are being diverted from the policyholder - so the same argument applies one level up.

(You might say that someone holding risk should be compensated for doing so - but insurers both set prices and have absolute right of refusal, so nah.)

Another fact which doesn't really change matters is targeted discounts: for various reasons (geographical diversification, or boosting the portfolio size, etc) an insurer might decide to offer people policies below the "risk price". But this isn't generally true, and is never a majority of premiums.

The real objection is that humans are more complicated than that. In particular, premise (5) obscures a fundamental fact about us: our utility is nonlinear, losses can hurt more than equal-sized gains delight, and uncertainty about losses is itself unpleasant.

Diminishing marginal utility

The world is beautiful because it varies. 1- Proverb

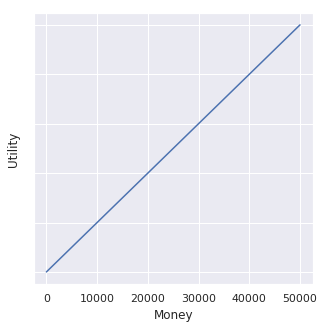

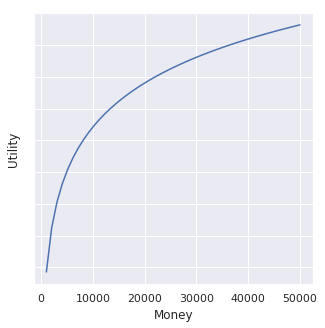

In short, we value increasing amounts of any particular thing less and less per unit. (Think how unusual it is for you to pay to see the same film in the cinema twice in succession, or any four films on the same day. Or consider the case of biscuits.) If valuing money looked like this:

6 - i.e. if getting £1000 when you’re rich was as welcome as it is when you’re poor, and if you valued bankruptcy as only a little worse than extreme poverty, then insurance wouldn’t make sense. However, people are instead something like this:

with a steep drop as you approach zero. As a result, it can be rational to purchase insurance, for things you can’t afford to replace. 7

Some people think this is foolish, because people “shouldn’t” have log utility in money: more is objectively better.3 But this is illegitimate, because economic rationality is defined relative to a given utility function (and, more practically, because one mostly doesn’t get to choose what one values). 8

This is a classic modelling mistake: to maximise x rather than U(x), to conflate the event with exposure to the event, to treat financial gain as identical with psychological gain.
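A rough numerical version of the difference between x and U(x), assuming log utility purely for illustration (the argument doesn't depend on the exact shape, and `utility_gain` is a hypothetical helper name):

```python
import math


def utility_gain(wealth, windfall, utility=math.log):
    """How much better off a windfall leaves you, measured in utility."""
    return utility(wealth + windfall) - utility(wealth)


# The same £1,000 at three (made-up) wealth levels.
for wealth in (2_000, 20_000, 200_000):
    log_gain = utility_gain(wealth, 1_000)                      # curved utility
    linear_gain = utility_gain(wealth, 1_000, lambda x: x)      # "more is better"
    print(wealth, round(log_gain, 4), linear_gain)
```

Under the linear view the £1,000 is worth the same everywhere; under the curved one it matters a great deal at £2,000 and hardly at all at £200,000.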

Insurance is gambling, and we are good at gambling

When reaching 'zero' (bankruptcy or death) is much worse than similar-sized losses above zero, you don't use expected value, but instead the conservative Kelly criterion. Given a few assumptions, this tells you how much you should pay for bets / insurance policies, given your current wealth:

Say your house is worth V = £100,000 and that your total assets, house included, are worth W = £120,000. Say also that you know the annual probability of a house of your vintage in your area burning down, p = 1/10000 or something. Then you buy insurance if its cost, C, satisfies

$$ \log(W - C) > p \times \log(W - V) + (1 - p) \times \log(W) $$

i.e. about £22 is the most you'd pay per year, by one rational measure.

(Don't take this too literally. It is tricky to use the criterion properly: this post explains all the dubious assumptions involved, including that (in this case) it values losing your house as infinitely bad. But it's a good way of bounding things.)
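For anyone who wants to check the arithmetic, here is a small sketch that solves the inequality above for the break-even C. It reads W as total wealth with the house included, which is how the inequality uses it; `max_premium` is a hypothetical helper name, and log utility is the assumption doing all the work.

```python
import math


def max_premium(wealth, loss, p_loss):
    """Largest premium C with log(W - C) >= p*log(W - L) + (1 - p)*log(W)."""
    if loss >= wealth:
        # log(0) is minus infinity: log utility treats total ruin as infinitely bad
        raise ValueError("loss must be smaller than total wealth")
    expected_log = p_loss * math.log(wealth - loss) + (1 - p_loss) * math.log(wealth)
    return wealth - math.exp(expected_log)


W, V, p = 120_000, 100_000, 1 / 10_000
print(round(max_premium(W, V, p), 2))  # about 21.5, the "£22" above
print(p * V)                           # expected loss, about 10
```

So under these assumptions you would rationally pay up to roughly twice the expected loss.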

That’s enough to kill the argument, but actually there’s more:

Risk aversion: uncertainty hurts

Some people are willing to lose a bit to “buy peace of mind”, i.e. they prefer a fixed cost x to a random cost with the same expected value, E(θ) = x. That’s weak risk aversion, and people often act under a stronger version, paying more than the expected value to swap a random cost for a fixed one, reducing their uncertainty.

(This is the origin of “premium”: the risk premium is the extra someone is willing to pay to mitigate a risk, over the expected value. The natural usage of “premium” is something else though…)

(NB: In utility theory this is the same phenomenon as DMU, in the sense of being implied by the same shape of utility function. But it’s psychologically distinct from the love of novelty in consumption, and I’m trying to stay close to psychological facts for these reasons.)
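Spelled out, assuming only that the utility function U is increasing and concave, and writing w for current wealth (w, c and π are notation introduced here for illustration). Let c be the fixed cost that feels exactly as bad as facing the random cost θ:

$$ U(w - c) = E[U(w - \theta)] $$

Jensen's inequality for a concave U gives

$$ E[U(w - \theta)] \le U(w - E(\theta)) $$

so c ≥ E(θ), and the risk premium is

$$ \pi = c - E(\theta) \ge 0 $$

That is, the same curvature that produces diminishing marginal utility also produces a willingness to pay above the expected value.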

Loss aversion: bad is worse than good is good

People often prefer to avoid losing £x more than they prefer to gain £x. This maybe explains why they do things like take out loans and insurance, and hold on to possessions despite not using them, even when these have a (small) negative expected value. This is to “smooth” their budgets and prevent the dismay of cuts.

(It doesn’t matter to us whether this is a distinct feature of human value, or merely a case of psychological inertia, where you want things to continue as they are.)
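One crude way to picture the asymmetry is a value function that is a straight line for gains and a steeper straight line for losses. The sketch below assumes losses weigh twice as much as gains; that factor of 2 is a placeholder, not an estimate, and `felt_value` and `bet_value` are hypothetical names.

```python
def felt_value(change, loss_weight=2.0):
    """Piecewise-linear value: losses are scaled up by loss_weight (an assumption)."""
    return change if change >= 0 else loss_weight * change


def bet_value(p_win, gain, loss, loss_weight=2.0):
    """Subjective value of a bet that wins `gain` or loses `loss`."""
    return (p_win * felt_value(gain, loss_weight)
            + (1 - p_win) * felt_value(-loss, loss_weight))


# A fair coin flip for £100 has zero expected money but feels like a loss.
print(bet_value(0.5, 100, 100))
```

With a weight of 1 the same bet comes out at exactly zero, which is the "people just want more money" picture from premise (5).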

How literally can we take this ‘function’ stuff?

Strictly speaking, the above is at best a useful fiction, because we don’t have “a” utility function: humans aren’t consistent enough to be described by a single payoff curve, or any specifiable set of curves - what we value depends on what mood we’re in, which depends on a host of chemical and accidental factors; and we often don’t know what we like (or more: don’t even have a preference) until we are made to choose things. So we violate the conditions of utility theory. Utility theory is a nice neat mathematical object.10 Humans are mostly buzzing blooming confusions. 9

Functions are an analogy for the (fairly solid) psychological regularities involved, not a big claim about human simplicity. One useful part of the ‘function’ analogy is normative: utility theory reminds us that preferences should be commensurate, if you don’t want to lose systematically.

2. Argument from regressive burden

So, insurance only makes sense for things you can’t afford to replace (unless you have asymmetric info, unusual preferences, subsidy, regulation…). But this means that the poorer you are, the more insurance you can rationally use! Insurance can be seen as the rich selling the poor a bit of resilience.

If everyone had a chunk of savings ($10k?), rationally speaking there would be no petty insurance - for things like household contents, warranties, flights, luggage. And this would reduce deadweight (wasted economic activity).
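Reusing the log-utility rule from the house example, here is a rough sketch of how the acceptable markup shrinks as wealth grows. The £1,000 loss, the 5% probability and the wealth levels are all invented for illustration, and `max_loading` is a hypothetical helper name.

```python
import math


def max_loading(wealth, loss, p_loss):
    """Highest acceptable premium, as a multiple of the expected loss,
    for someone with log utility in total wealth."""
    expected_log = p_loss * math.log(wealth - loss) + (1 - p_loss) * math.log(wealth)
    break_even_premium = wealth - math.exp(expected_log)
    return break_even_premium / (p_loss * loss)


# Same petty risk, three hypothetical levels of total wealth.
for wealth in (1_200, 10_000, 100_000):
    print(wealth, round(max_loading(wealth, loss=1_000, p_loss=0.05), 2))
```

Someone with £1,200 to their name can rationally pay about double the expected loss; with £10,000 the tolerable markup is around 5%, and with £100,000 it all but vanishes, so almost any real premium is too dear.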

“There could be a better world than our present one,” this says. This is true and good to remember, but not helpful: a world in which the poor didn’t insure themselves against things they can’t afford to replace is worse than our present one.

Misc notes

Insurance and self-insurance

Insurance as inertia or deletion of nonergodic nodes

the anomalies loss aversion was introduced to explain — the risky bet premium, the endowment effect, and the status-quo bias — are characterized not only by a loss/gain tradeoff, but by a tradeoff between the status-quo and change; and, that a propensity towards the status-quo in the latter tradeoff is sufficient to explain these phenomena. Moreover, I show that two basic psychological principles — (1) that motives drive behavior; and (2) that preferences tend to be fuzzy and ill-defined — imply the existence of a robust and fundamental propensity of this sort.

Another strand denies that such bets are negative value at all: if you consider not the single-decision expected payoff but the 'time-average growth rate of wealth', then supposedly insurance averages out positive over a population over (a long) time.

I'm not sure about this, and the debate has an unproductive amount of heat (Gal accuses the field of bias to explain loss aversion's long life), partly owing to Taleb's twitter brigade getting involved. (A fine display of his personal "IYIs".) But the evidence for loss aversion is certainly weaker than I expected.

Solve for the equilibrium

Ben Orlin on the grim future of perfect knowledge

When we looked at the coins, we reduced our uncertainty, and without uncertainty, insurance collapses. If we know in advance who will suffer — whose boat will sink, whose employees will win the lottery, who will suffer that NFL-dream-crushing injury — then insurance becomes impossible. You can only pool risk among people who share it. In our medical system, this problem is unfolding year by year. Genetic testing and improved statistics threaten the basic logic of insurance. Here.

I see no easy solution. If you individualize the rates, then some folks pay pennies while others face premiums almost as steep as the medical bills themselves. But charging everyone the same amount turns a project of mutual benefit, with each of us hedging against risk, into a project of collective charity, with some folks subsidizing others. That’s a harder sell. It’s one reason why American healthcare remains so contentious.

As a teacher, I’m inclined to think of all knowledge as a gift. But insurance complicates that story. Our ignorance of fate can force us to collaborate against it. We have built a democracy from our uncertainty—and new knowledge threatens that balance, as sure as flood or fire.

- Most large endeavours couldn't exist without heavy insurance - the financial risk would scare everyone off otherwise. And being able to compensate people we harm, without ruining ourselves forever, is a clear win.

- Il mondo è bello perché è vario.

- US market:

  Not a single underwriting profit was recorded in the 25 years from 1979 through 2003. And even though that streak ended in 2004, underwriting profits are anything but the norm they were in the 1930s, 1940s, and 1950s. In the eight years from 2004 through 2011, only three tallied underwriting profits.

- For a tiny number of people - those who give a lot to charity - this is justified, because they transfer money to help those at the sharper end of their own utility functions.

- and the similar UK market:

  EY expects the industry to post a combined ratio of almost 98 per cent this year. The industry has only posted a ratio below 100 per cent — suggesting an underwriting profit — in five of the past 33 years.

- Actuaries aren't perfect modellers by a long shot, but on average they do ok, enough to unlock the net investment profit anyway. So premise (3) is true.

- One reason Wodehouse novels are so enchanting is that they don't get boring despite being really formulaic. I've read 20 and plan to read the other 75.

- Strong claims about the relationship between money and wellbeing are often made, though they currently contradict each other. One large study (n=1m) found "income satiation" (no strong evidence for increased happiness) at about $95k.

  But we don't need any parameter in this post. (It just amazes me that we are only just beginning to study this incredibly important subject, 200 years into the age of mature science, 100 into the age of premature social science.)

- There could be people whose utility is linear in money - made happiest by simply following naive game theory and being risk-insensitive. But my friend is not one of them (you can tell because he isn't a crack-smuggling quant who hacks crypto exchanges in his spare time), so the argument as stated, as a general norm, fails.

- You get further if you think of a person as a bundle of partially conflicting agents. (For a flawed prototype, consider Freud's plagiarism of Plato.)

- Not that it's a complete solution to even rational preferences.


Tags: utility, rationality, finance