Now a crowdsourced project elsewhere. Seeking volunteers!

A medical reversal is when an existing treatment is found to actually be useless or harmful. Psychology has in recent years been racking up reversals: in fact only 40-65% of its classic social results were replicated, in the weakest sense of finding ‘significant’ results in the same direction. (Even in those that replicated, the average effect found was half the originally reported effect.) Such errors are far less costly to society than medical errors, but it’s still pollution, so here’s the cleanup. 1

Psychology is not alone: medicine, cancer biology, and economics all have many irreplicable results. It’d be wrong to write off psychology: we know about most of the problems here because of psychologists, and its subfields differ a lot by replication rate and effect-size shrinkage.

One reason psychology reversals are so prominent is that it’s an unusually ‘open’ field in terms of code and data sharing. A less scientific field would never have caught its own bullshit.

The following are empirical findings about empirical findings; they’re all open to re-reversal. Also it’s not that “we know these claims are false”: failed replications (or proofs of fraud) usually just challenge the evidence for a hypothesis, rather than affirm the opposite hypothesis. I’ve tried to ban myself from saying “successful” or “failed” replication, and to report the best-guess effect size rather than play the bad old Yes/No science game. 2

Figures correct as of March 2020; I will put some effort into keeping this current, but not that much.

Code for converting means to Cohen’s d and Hedge’s g here.

Social psychology

No good evidence for many forms of priming, automatic behaviour change from ‘related’ (often only metaphorically related) stimuli. 3

- Questionable evidence for elderly priming, that hearing about old age makes people walk slower. The p-curve alone argues against the first 20 years of studies.

Stats

- Original paper: 'Automaticity of social behavior', Bargh 1996; 2 experiments with n=30. (~5200 citations)

- Critiques: Doyen 2012 (n=120), Pashler 2011 (n=66). Meta-analysis: Lakens 2017.

Total citations: ~44 - Original effect size: d=0.82 to d=1.08.

- Replication effect size: Doyen: d= minus 0.07. Pashler: d= minus 0.22

- No good evidence for professor priming, improved (“+13%”) performance at trivia after picturing yourself as a professor vs as a thug.

Stats

- Original paper: 'The relation between perception and behavior, or how to win a game of Trivial Pursuit', Dijksterhuis and van Knippenberg (1998), n=60.

(~1000 citations). - Critiques: O'Donnell et al, 23 direct replications, cumulative n=4,493. Lakens 2017 again. Citations: ~40.

- Original effect size: 13%

- Replication effect size: 0.14% [-0.71, 1.00]

- No good evidence for the Macbeth effect, that moral aspersions induce literal physical hygiene.

Stats

- Original paper: 'Washing away your sins: threatened morality and physical cleansing', Zhong & Liljenquist 2006.

(~1190 citations). - Critiques: Siev 2018, meta-analysis of 15 studies, cumulative n=1,746.

Citations: ~6 - Original effect size: g = 0.86 [0.05, 1.68] for Study 3.

- Replication effect size: g = 0.07 [-0.04, 0.19] among the independent labs

- No good evidence for money priming, that “images or phrases related to money cause increased faith in capitalism, and the belief that victims deserve their fate”, etc.

Stats

- Original paper: 'Mere exposure to money increases endorsement of free-market systems and social inequality', Caruso 2013. n between 30 and 168

(~120 citations). - Critiques: Rohrer 2015, n=136. Lodder 2019, a meta-analysis of 246 experiments.

(total citations: ~70) - Original effect size: system justification d=0.8, just world d=0.44, dominance d=0.51

- Replication effect size: For 47 preregistered experiments in Lodder:

g = 0.01 [-0.03, 0.05] for system justification,

g = 0.11 [-0.08, 0.3] for belief in a just world,

g = 0.07 [-0.02, 0.15] for fair market ideology.

- No good evidence of anything from the Stanford prison ‘experiment’. It was not an experiment; ‘demand characteristics’ and scripting of the abuse; constant experimenter intervention; faked reactions from participants; as Zimbardo concedes, they began with a complete “absence of specific hypotheses”.

Stats

- Original paper: 'Interpersonal dynamics in a simulated prison', Zimbardo 1973

(1800 citations, but cited by books with hundreds of thousands of citations). - Critiques: convincing method & data inspection - Le Texier 2019

(total citations: ~8) - Original effect size: Key claims were insinuation plus a battery of difference in means tests at up to 20% significance(!). n="21" (typo for 24?).

- Replication effect size: N/A

- No good evidence from the original Milgram experiments that 65% of people will inflict pain if ordered to. Experiment was riddled with researcher degrees of freedom, going off-script, implausible agreement between very different treatments, and “only half of the people who undertook the experiment fully believed it was real and of those, 66% disobeyed the experimenter.”

Stats

- Original paper: Behavioral Study of obedience, Milgram 1963. n=40

(~6600 citations). (The full range of conditions was n=740.) - Critiques: Burger 2011, Perry 2012, Brannigan 2013; Griggs 2016

(total citations: ~240). - Original effect size: 65% of subjects said to administer maximum, dangerous voltage.

- Replication effect size:

Doliński 2017 is relatively careful, n=80, and found comparable effects to Milgram. Burger (n=70) also finds similar levels of compliance to Milgram, but the level didn't scale with the strength of the experimenter prods (see Table 5: the only real order among the prompts led to universal disobedience), so whatever was going on, it's not obedience.

One selection of follow-up studies found average compliance of 63%, but suffer from the usual publication bias and tiny samples. (Selection was by a student of Milgram.) The most you can say is that there's weak evidence for compliance, rather than obedience. ("Milgram's interpretation of his findings has been largely rejected.")

- No evidence from the original Festinger analysis that a particular cult responded to a falsified prediction of alien rapture by redoubling their faith. In fact, as you’d expect, they disbanded. The general theory of cognitive dissonance has other resources.

Stats

- No good evidence that tribalism arises spontaneously following arbitrary groupings and scarcity, within weeks, and leads to inter-group violence . The “spontaneous” conflict among children at Robbers Cave was orchestrated by experimenters; tiny sample (maybe 70?); an exploratory study taken as inferential; no control group; there were really three experimental groups - that is, the experimenters had full power to set expectations and endorse deviance; results from their two other studies, with negative results, were not reported.

Stats

- Original paper: 'Superordinate Goals in the Reduction of Intergroup Conflict', Sherif 1958, n=22; (His books on the studies are more cited: 'Groups in harmony and tension' (1958) and Intergroup Conflict and Co-operation'.)

(~7000 total citations including the SciAm puff piece). - Critiques: Billig 1976 in passing (729 citations), Perry 2018 (citations: 9)

- Original effect size: Not that kind of psychology. ("results obtained through observational methods were cross-checked with results obtained through sociometric technique, stereotype ratings of in-groups and outgroups, and through data obtained by techniques adapted from the laboratory. Unfortunately, these procedures cannot be elaborated here.")

- Replication effect size: N/A

(Set aside the ethics: the total absence of consent - the boys and parents had no idea they were in an experiment - or the plan to set the forest on fire and leave the boys to it.)

Tavris claims that the underlying "realistic conflict theory" is otherwise confirmed. Who knows.

- Lots of screen-time is not strongly associated with low wellbeing; it explains about as much of teen sadness as eating potatoes, 0.35%.

Stats

- Original paper: Media speculation? (millions of 'citations').

- Critiques: Orben 2019, n=355,358

- Original effect size: N/A

- Replication effect size: median association of technology use with adolescent well-being was β=−0.035, s.e.=0.004

- The usual suspects responded with a spec curve analysis (great!) and go fishing for a subgroup where the effect is notable. They find one (social media time in girls rather than screentime in the young), but only after misusing Rohrer 2018 to justify dropping important confounders as potential mediators.

- No good evidence that female-named hurricanes are more deadly than male-named ones. Original effect size was a 176% increase in deaths, driven entirely by four outliers; reanalysis using a greatly expanded historical dataset found a nonsignificant decrease in deaths from female named storms.

Stats

- Original paper: 'Female hurricanes are deadlier than male hurricanes', Jung 2014, n=92 hurricanes discarding two important outliers.

(~76 citations). - Critiques: Christensen 2014. Smith 2016, n=420 large storms.

(total citations: ~15) - Original effect size: d=0.65: 176% increase in deaths from flipping names from relatively masculine to relatively feminine

- Replication effect size: Smith: 264% decrease in deaths (Atlantic); 103% decrease (Pacific).

- At most weak use in implicit bias testing for racism. Implicit bias scores poorly predict actual bias, r = 0.15. The operationalisations used to measure that predictive power are often unrelated to actual discrimination (e.g. ambiguous brain activations). Test-retest reliability of 0.44 for race, which is usually classed as “unacceptable”. This isn’t news; the original study also found very low test-criterion correlations.

Stats

- Original paper: 'Measuring individual differences in implicit cognition: The implicit association test', Greenwald 1998, n=28 for Experiment 3

(12,322 citations). - Critiques: Oswald 2013, meta-analysis of 308 experiments. Carlsson 2015.

(total citations: ~650) - Original effect size: attitude d=0.58; r=0.12.

- Replication effect size: Oswald: stereotype IAT r=0.03 [-0.08, 0.14],

attitude IAT r=0.16 [0.11, 0.21]

- The Pygmalion effect, that a teacher’s expectations about a student affects their performance, is at most small, temporary, and inconsistent, r<0.1 with a reset after weeks. Rosenthal’s original claims about massive IQ gains, persisting for years, are straightforwardly false (“The largest gain… 24.8 IQ points in excess of the gain shown by the controls.”), and used an invalid test battery. Jussim: “90%–95% of the time, students are unaffected by teacher expectations”.

Stats

- Original paper: 'Teachers' expectancies: Determinants of pupils' IQ gains', Rosenthal 1966, n around 320.

(700 citations, but the popularisation has 10,500). - Critiques: Raudenbush 1984, Thorndike 1986, Spitz 1999, Jussim 2005

(total citations: ~2100) - Original effect size: Average +3.8 IQ, d=0.25.

- Replication effect size: Raudenbush: d=0.11 for students new to the teacher, tailing to d=0 otherwise. Snow: median effect d=0.035.

- At most weak evidence for stereotype threat suppressing girls’ maths scores. i.e. the interaction between gender and stereotyping.

Stats

- Original paper: 'Stereotype Threat and Women’s Math Performance', Spencer 1999, n=30 women

(~3900 citations). - Critiques: Stoet & Geary 2012, meta-analysis of 23 studies. Ganley 2013, n=931. Flore 2015, meta-analysis of 47 measurements. Flore 2018, n=2064. Inglis & O'Hagan 2022, n=250,000

(total citations: ~500) - Original effect size: Not reported properly; Fig.2 looks like control-group-women-mean-score = 17 with sd=20, and experiment-group-women-score = 5 with sd=15. Which might mean roughly d= −0.7.

- Replication effect size:

Stoet: d= −0.17 [−0.27, −0.07] for unadjusted scores.

Ganley: various groups, d= minus 0.27 to 0.17.

Flore 2015: g= −0.07 [−0.21; 0.06] after accounting for publication bias.

Flore 2018: d= −0.05 [−0.18, 0.07]

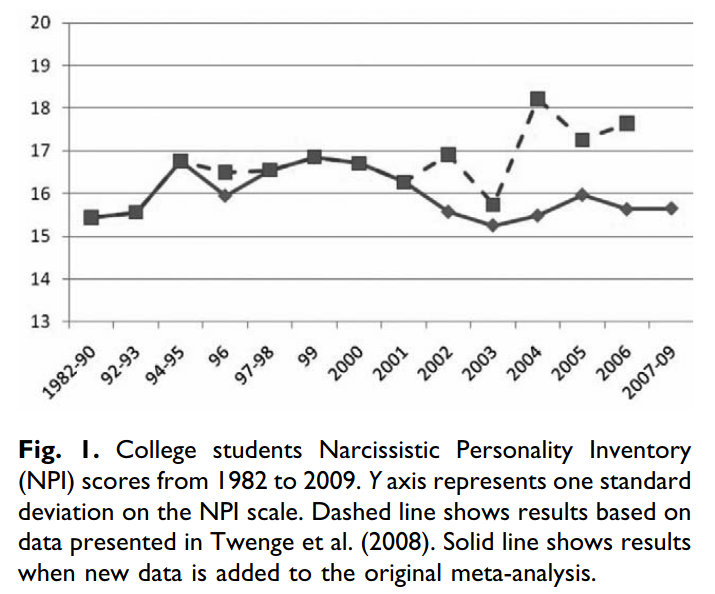

- Questionable evidence for an increase in “narcissism” (leadership, vanity, entitlement) in young people over the last thirty years. The basic counterargument is that they’re misidentifying an age effect as a cohort effect (The narcissism construct apparently decreases by about a standard deviation between adolescence and retirement.) “every generation is Generation Me”

All such “generational” analyses are at best needlessly noisy approximations of social change, since generations are not discrete natural kinds, and since people at the supposed boundaries are indistinguishable.

Stats

- Original paper: Twenge 2006, 'Generation Me', but it's an ancient hypothesis. Various studies, including national surveys.

(~2600 citations) - Critiques: Five studies from Donnellan and Trzesniewski, n=477,380. Arnett 2013, Roberts 2017, Wetzel 2017

(~660 total citations) - Original effect size: d=0.37 increase in NPI scores (1980-2010), n=49,000.

- Replication effect size: Roberts doesn't give a d but it's near 0. something like d=0.03 ((15.65 - 15.44) / 6.59 )

Table 3 here shows a mix of effects in 30 related constructs between 1977 and 2006, up and down.

Wetzel: d = minus 0.27 (1990 - 2010)

- Be very suspicious of anything by Diederik Stapel. 58 retractions here.

Positive psychology

- No good evidence that taking a “power pose” lowers cortisol, raises testosterone, risk tolerance.

That a person can, by assuming two simple 1-min poses, embody power and instantly become more powerful has real-world, actionable implications.

After the initial backlash, it focussed on subjective effect, a claim about “increased feelings of power”. Even then: weak evidence for decreased “feelings of power” from contractive posture only. My reanalysis is here.

Stats

- Original paper: 'Power Posing : Brief Nonverbal Displays Affect Neuroendocrine Levels and Risk Tolerance', Cuddy, Carney & Yap 2010, n=42 mixed sexes.

Many, many errors. Disowned by one of its authors. Thanks to a reanalysis by someone else, we actually have the data.

(~1100 citations; 56m views on TED). - Critiques: Ranehill 2015, n=200 (not an exact replication);

Garrison 2016, n=305;

Simmons and Simonsohn 2016, p-curve check of 33 studies;

Ronay 2017, n=108;

Metzler 2019, n=82 men.

Crede 2017, Crede 2018: multiverse analysis shows that the original result is heavily dependent on posthoc analysis choices.

(total citations: ~400) - Original effect sizes:

h = 0.61 in risk-taking,

d = minus 0.30 for cortisol,

d=0.35 for testosterone

d=0.79 for feelings of power - Replication effect size:

risk-taking d = [−0.176],

testosterone d = [−0.2, −0.19, 0.121],

cortisol d = [−0.157, 0.22, 0.028, 0.034]

most CIs overlapping 0

- Weak evidence for facial-feedback (that smiling causes good mood and pouting bad mood).

Stats

- Original paper: 'Inhibiting and Facilitating Conditions of the Human Smile: A Nonobtrusive Test of the Facial Feedback Hypothesis' by Strack, Martin, Stepper 1988. n=92 twice.

(~2200 citations). - Critiques: 17 replications, Wagenmakers et al 2016,

(total citations: ~220), Schimmack 2017 - Original effect size: d = 0.43 (0.82 out of 9)

- Replication effect size: 0.03 out of 9, CI overlapping 0.

A meta-analysis of 98 studies finds d= 0.2 [0.14, 0.26] with an absurdly low p value, and doesn't find publication bias. But this latter point simply can't be right. Given d = 0.2 and the convention of targeting 80% power to detect a real phenomenon, you would need very high sample sizes, n > 500. And almost all of the included studies are N < 100. Schimmack finds strong evidence of publication bias on a subset of these papers, using a proper power analysis.

98 pieces of very weak evidence cannot sum to strong evidence, whatever the p-value says. (The author agrees.)

- Reason to be cautious about mindfulness for mental health. Most studies are low quality and use inconsistent designs, there’s higher heterogeneity than other mental health treatments, and there’s strong reason to suspect reporting bias. None of the 36 meta-analyses before 2016 mentioned publication bias. The hammer may fall.

Stats

- Critiques: Coronado-Montoya 2016

- Original effect size: prima facie, d=0.3 for anxiety or depression

- Replication effect size: Not yet.

- No good evidence for Blue Monday, that the third week in January is the peak of depression or low affect ‘as measured by a simple mathematical formula developed on behalf of Sky Travel’. You’d need a huge sample size, in the thousands, to detect the effect reliably and this has never been done.

Cognitive psychology

- Good evidence against ego depletion, that willpower is limited in a muscle-like fashion.

Stats

- Original paper: 'Ego Depletion: Is the Active Self a Limited Resource?', Baumeister 1998, n=67.

(~5700 citations). - Critiques: Hagger 2016, 23 independent conceptual replications. Classic post.

(total citations: ~640) - Original effect size: something like d = minus 1.96 between control and worst condition. (I hope I'm calculating that wrong.)

4 - Replication effect size: d = 0.04 [−0.07, 0.14]. (NB: not testing the construct the same way.)

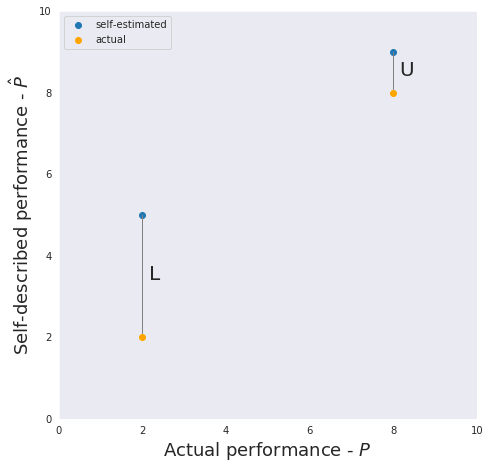

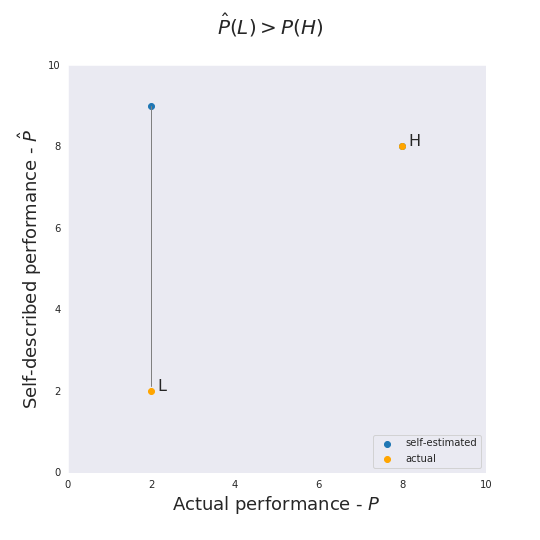

- Mixed evidence for the Dunning-Kruger effect. No evidence for the “Mount Stupid” misinterpretation.

Stats

First disambiguate the claim

1) the one the authors actually make: that poor performers (e.g. the bottom quartile) overestimate their performance more than good performers do: $$L > U$$

6

(Formally "the difference \( \hat{P} - P \) has a negative slope over actual performance moderator".)

2) that people in general overestimate their own ability \( \hat{P} > P \)

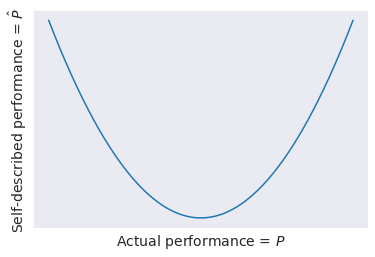

3) the meme: that there's a u-shaped relationship between perceived and actual ability. "The less competent someone is, the more competent they think they are".

4) Alternatively, that poor performers think they're better than good performers.

5) the authors' explanation: that (1) is caused by a lack of 'metacognitive' skills, being able to reflect on your ability at the task. That it's a cognitive bias suffered by the worst at a task. 5

- Original paper: 'Unskilled and unaware of it: how difficulties in recognizing one's own incompetence lead to inflated self-assessments.', Dunning & Kruger 1999, n=334 undergrads. This contains claims (1), (2), and (5) but no hint of (3) or (4).

(~5660 citations). - Critiques: Gignac 2020, n=929; Nuhfer 2016 and Nuhfer 2017, n=1154; Luu 2015; Greenberg 2018, n=534; Yarkoni 2010.

(total citations: ~20) - Original effect size: No sds reported so I don't know.

2 of the 4 experiments showed a positive relationship between score and perceived ability; 2 showed no strong relationship. And the best performers tended to underestimate their performance. This replicates: the correlation between your IQ and your assessment of it is around \(r \simeq 0.3\). (3) and (4) are not at all warranted.

(5) is much shakier than (1). The original paper concedes that there's a purely statistical explanation for (1): just that it is much easier to overestimate a low number which has a lower bound! And the converse: if I am a perfect performer, I am unable to overestimate myself. D&K just think there's something notable left when you subtract this.

It's also confounded by (2). Here's a great explainer. - Replication effect size (for claim 1): 3 of the 4 original studies can be explained by noisy tests, bounded scales, and artefacts in the plotting procedure. ("the primary drivers of errors in judging relative standing are general inaccuracy and overall biases tied to task difficulty".) Only about 5% of low-performance people were very overconfident (more than 30% off) in the Nuhfer data.

Gignac & Zajenkowski use IQ rather than task performance, and run two less-confounded tests, finding \(r = -0.05\) between P and errors, and \(r = 0.02\) for a quadratic relationship between \(P\) and \(\hat{P}\).

Jansen (2021) find independent support for claim 1 (n=3500) (the "performance-dependent estimation model") and also argue for (5), since they find less evidence for an alternative explanation, Bayesian reasoning towards a prior of "I am mediocre". (Fig 5b follows the original DK plot style, and is very unclear as a result.)

Muller (2020) replicate claim (1) and add some EEG stuff.

Some suggestion that claim (2) is WEIRD only.

Here's some helpful terminology.

- Questionable evidence for a tiny “depressive realism” effect, of increased predictive accuracy or decreased cognitive bias among the clinically depressed.

Stats

- Original paper: 'Judgment of contingency in depressed and nondepressed students: sadder but wiser?', 1979 (2450 citations).

- Critiques: Moore & Fresco 2012

(211 total citations) - Original effect size: d= minus 0.32 for bias about 'contingency', how much the outcome actually depends on what you do,

n=96 students, needlessly binarised into depressed and nondepressed based on Beck score > 9. (Why?) - Replication effect size: d = minus 0.07 with massive sd=0.46, n=7305, includes a trim-and-fill correction for publication bias. "Overall, however, both dysphoric/depressed individuals (d= .14) and nondysphoric/nondepressed individuals evidenced a substantial positive bias (d= .29)"

- Good evidence against choice overload / the tyranny of freedom, that having too many options leads to paralysis or dissatisfaction.

Stats

- Original paper: 'When Choice Is Demotivating: Can One Desire Too Much of a Good Thing?', Iyengar 2000, n=502.

(~5700 citations). - Critiques: Scheibehenne 2010, 50 independent conceptual replications with combined n=5000. Attempt to salvage it here.

(total citations: ~640) - Original effect size: ??

- Replication effect size: d = 0.02 [-0.09 to 0.12].

- Questionable evidence for the “hungry judge” effect, of massively reduced acquittals (d=2) just before lunch. Case order isn’t independent of acquittal probability (“unrepresented prisoners usually go last and are less likely to be granted parole”); favourable cases may take predictably longer and so are pushed until after recess; effect size is implausible on priors; explanation involved ego depletion.

Stats

- Original paper: 'Extraneous factors in judicial decisions', 2011 (1040 citations).

- Critiques: Weinshall-Margel 2011, Glöckner 2016, Lakens 2017

(77 total citations) - Original effect size: d=1.96, "the probability of a favorable ruling steadily declines from ≈0.65 to [0.05] and jumps back up to ≈0.65 after a break for a meal",

n=8 judges with n=1100 cases. - Replication effect size: N/A.

- No good evidence for multiple intelligences (in the sense of statistically independent components of cognition). Gardner, the inventor: “Nor, indeed, have I carried out experiments designed to test the theory… I readily admit that the theory is no longer current. Several fields of knowledge have advanced significantly since the early 1980s.”

Stats

- Original paper: Frames of Mind: The Theory of Multiple Intelligences, Gardner 1983

(37,229 citations).

- At most weak evidence for brain training (that is, “far transfer” from daily training games to fluid intelligence) in general, in particular from the Dual n-Back game.

Stats

- Original paper: 'Improving fluid intelligence with training on working memory', Jaeggi 2008, n=70.

(2200 citations). - Critiques: Melby-Lervåg 2013, meta-analysis of 23 studies.

Gwern 2012, meta-analysis of 45 studies. - Original effect size: d=0.4 over control, 1-2 days after training

- Replication effect size:

Melby: d=0.19 [0.03, 0.37] nonverbal; d=0.13 [-0.09, 0.34] verbal.

Gwern: d=0.1397 [-0.0292, 0.3085], among studies using active controls.

Maybe some effect on non-Gf skills of the elderly.

A 2020 RCT on 572 first-graders finds an effect (d=0.2 to 0.4), but many of the apparent far-transfer effects come only 6-12 months later, i.e. well past the end of most prior studies.

In general, be highly suspicious of anything that claims a positive permanent effect on adult IQ. Even in children the absolute maximum is 4-15 points for a powerful single intervention (iodine supplementation during pregnancy in deficient populations).

See also the hydrocephaly claim under “Neuroscience”.

Good replication rate elsewhere.

Developmental psychology

- Some evidence for a tiny effect of growth mindset (thinking that skill is improveable) on attainment.

Stats

- Original paper: 'Implicit theories and their role in judgments and reactions: A word from two perspectives', Dweck 1995 introduced the constructs.

(~2200 citations). - Critiques:

Sisk 2018, a pair of meta-analyses on both questions, n=365,915 ;

Folioano 2019, a big study of the intervention in English schools, n=4584.

(~180 total citations) - Original effect size: Hard to pin down, but up to r = 0.54 / d=0.95 in some papers.

- Replication effect size:

Sisk: r = 0.10 [0.08, 0.13] for the (nonexperimental) correlation

Sisk: d = 0.08 [0.02, 0.14]

Folioano: Literally zero, d=0.00 [-0.02; 0.02]

- Mixed evidence for a small marshmallow effect, that ability to delay gratification as a young person predicts educational outcomes at 15 or beyond (Mischel).

Stats

- Original paper: 'Attention in Delay of Gratification', Mischel 1970, n=68, all children of Stanford academics or their friends.

(~ 1000 citations). - Critiques: Watts et al 2018 (n=918), Benjamin & Mischel 2020 (n=113 of the original participants!)

(total citations: ~ 120) - Original effect size: r= [0.42, 0.57] for SAT

- Replication effect size: Watts: r=0.28. But after controlling for the socioeconomic status of the child's family, the Marshmallow effect is r=0.05 or d=0.1, one-tenth of a standard deviation for an additional minute delay, 'nonsignificant' p-values. And since it's usually easier to get SES data...

B&M (2020) note that preschool self-control is not predictive for success at 46. However, the impressive Dunedin longitudinal study (n=1000 over 29 years) is some independent evidence for childhood self-control being persistent on many things, including dropping out of school. (Also some hair-raising results: "24% of the study members had been convicted of a crime by the age of 32 y.")

- “Expertise attained after 10,000 hours practice” (Gladwell). Disowned by the supposed proponents.

- No good evidence that tailoring teaching to students’ preferred learning styles has any effect on objective measures of attainment. There are dozens of these inventories, and really you’d have to look at each. (I won’t.)

Stats

- Original paper: Multiple origins. e.g. the 'Learning style inventory: technical manual' (Kolb), ~4200 citations. The VARK questionnaire (Fleming). But it is ubiquitous in Western educational practice.

- Critiques: Willingham 2015; Pashler 2009; Knoll 2017 (n=54); Husmann 2019

(total citations: ~2400 ) - Original effect size: ???

- Replication effect size: [ ], n=

Personality psychology

Pretty good? One lab’s systematic replications found that effect sizes shrank by 20% though. See the comments for someone with a fundamental critique.

Anything by Hans Eysenck should be considered suspect, but in particular these 26 ‘unsafe’ papers (including the one which says that reading prevents cancer).

Behavioural science

The effect of “nudges” (clever design of defaults) may be exaggerated in general. One big review found average effects were six times smaller than billed. (Not saying there are no big effects.)

Here are a few cautionary pieces on whether, aside from the pure question of reproducibility, behavioural science is ready to steer policy.

- Moving the signature box to the top of forms does not decrease dishonest reporting in the rest of the form.

Marketing

Brian Wansink accidentally admitted gross malpractice; fatal errors were found in 50 of his lab’s papers. These include flashy results about increased portion size massively reducing satiety.

Aaron Charlton maintains a remarkable list here.

Neuroscience

No good evidence that brains contain one mind per hemisphere. The corpus callosotomy studies which purported to show “two consciousnesses” inhabiting the same brain were badly overinterpreted.

Very weak evidence for the existence of high-functioning (IQ ~ 100) hydrocephalic people. The hypothesis begins from extreme prior improbability; the effect of massive volume loss is claimed to be on average positive for cognition; the case studies are often questionable and involve little detailed study of the brains (e.g. 1970 scanners were not capable of the precision claimed).

Stats

- Original paper: No paper; instead a documentary and a profile of the claimant, John Lorber. Also Forsdyke 2015 and the fraudulent de Oliveira 2012

( citations). - Critiques: Hawks 2007; Neuroskeptic 2015; Gwern 2019

(total citations: )

Alex Maier writes in with a cool 2007 case study of a man who got to 44 years old before anyone realised his severe hydrocephaly, through marriage and employment. IQ 75 (i.e. d=-1.7), which is higher than I expected, but still far short of the original claim, d=0.

Readiness potentials seem to be actually causal, not diagnostic. So Libet’s studies also do not show what they purport to. We still don’t have free will (since random circuit noise can tip us when the evidence is weak), but in a different way.

No good evidence for left/right hemisphere dominance correlating with personality differences. No clear hemisphere dominance at all in this study.

Stats

- Original paper: Media speculation?

- Critiques:

(total citations: ) - Original effect size: N/A?

- Replication effect size: [ ], n=

Psychiatry

- At most extremely weak evidence that psychiatric hospitals (of the 1970s) could not detect sane patients in the absence of deception.

Parapsychology

- No good evidence for precognition, undergraduates improving memory test performance by studying after the test. This one is fun because Bem’s statistical methods were “impeccable” in the sense that they were what everyone else was using. He is Patient Zero in the replication crisis, and has done us all a great service. (Heavily reliant on a flat / frequentist prior; evidence of optional stopping; forking paths analysis.)

Stats

- Original paper: 'Feeling the future: Experimental evidence for anomalous retroactive influences on cognition and affect', Bem 2012, 9 experiments, n=1000 or so.

(~1000 citations, but mostly not laudatory). - Critiques: Ritchie 2012, n=150. On one of the nine.

Gelman 2013; Schimmack 2018, methodology.

(total citations: 200) - Original effect size: Various, mean d=0.22. For experiment 9, r= minus 0.10.

- Replication effect size: Correlation between r= minus 0.02

Evolutionary psychology

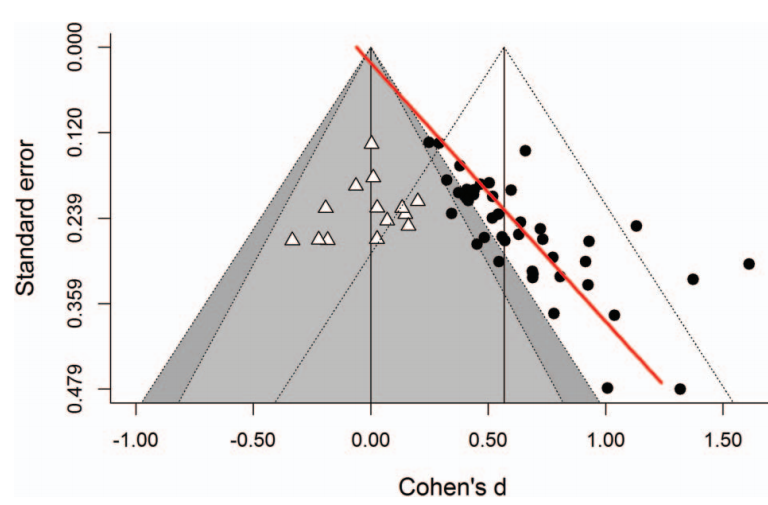

- Weak evidence for romantic priming, that looking at attractive women increases men’s conspicuous consumption, time discount, risk-taking. Weak, despite there being 43 independent confirmatory studies!: one of the strongest publication biases / p-hacking ever found.

Stats

- Original paper: 'Do pretty women inspire men to discount the future?', Wilson and Daly 2003. n=209 (but only n=52 for each cell in the 2x2)

(~560 citations). - Critiques: Shanks et al (2015): show that the 43 previous studies have an unbelievably bad funnel plot. They also run 8 failed replications.

(total citations: ~80)

- Original effect size: d=0.55 [-0.04, 1.13] for the difference between men and women. Meta-analytic d= 0.57 [0.49, 0.65] !

- Replication effect size: 0.00 [-0.12, 0.11]

- Questionable evidence for the menstrual cycle version of the dual-mating-strategy hypothesis (that “heterosexual women show stronger preferences for uncommitted sexual relationships [with more masculine men]… during the high-fertility ovulatory phase of the menstrual cycle, while preferring long-term relationships at other points”). Studies are usually tiny (median n=34, mostly over one cycle). Funnel plot looks ok though.

Stats

- Original paper: 'Menstrual cycle variation in women's preferences for the scent of symmetrical men', Gangestad and Thornhill (1998). (602 citations).

- Critiques: Jones et al (2018)

(total citations: 32) - Original effect size: g = 0.15, SE = 0.04, n=5471 in the meta-analysis. Massive battery of preferences included (...)

- Replication effect size: Not a meta-analysis, just a list of recent well-conducted "null" studies and a plausible alternative explanation.

Note from a professor friend: the idea of a dual-mating hypothesis itself is not in trouble:the specific menstrual cycle research doesn't seem to replicate well. However, to my knowledge the basic pattern of short vs long term relationship goals predicting [women's] masculinity preferences is still robust.

- No good evidence that humans are cognitively or emotionally limited to 150 relationships with other people (Dunbar’s number). Nutrition was a way more important constraint than cognition. The variation is so great that we can’t say anything about the cognitive upper bound. “Dunbar’s method arrives at an estimate of average human group size. But then it is used to indicate maximum human group size”. Anthropology shares the blame.

Stats

- Original paper: Dunbar 1992 (3029 citations).

- Critiques: Lindenfors 2021

(total citations: ). - Original: "100-200", n=38 genuses of primate with mean brain volumes

- Replication: 4–520, n=more

- No good evidence that large parents have more sons (Kanazawa); original analysis makes several errors and reanalysis shows near-zero effect. (Original effect size: 8% more likely.)

Stats

- Original paper: ( citations).

- Critiques:

(total citations: ) - Original effect size: [ ], n=

- Replication effect size: [ ], n=

- At most weak evidence that men’s strength in particular predicts opposition to egalitarianism.

Stats

- Original paper: Petersen et al (194 citations).

- Critiques: Measurement was of arm circumference in students, and effect disappeared when participant age is included.

(total citations: 605) - Original effect size: N/A, battery of F-tests.

- Replication effect size: Gelman: none as in zero.

The same lab later returned with 12 conceptual replications on a couple of measures of (anti-)egalitarianism. They are very focussed on statistical significance instead of effect size. Overall male effect was b = 0.17 and female effect was b = 0.11, with a nonsignificant difference between the two (p = 0.09). (They prefer to emphasise the lab studies over the online studies, which showed a stronger difference.) Interesting that strength or "formidability" has an effect in both genders, whether or not their main claim about gender difference holds up.

Psychophysiology

- At most very weak evidence that sympathetic nervous system activity predicts political ideology in a simple fashion. In particular, subjects’ skin conductance reaction to threatening or disgusting visual prompts - a noisy and questionable measure.

Stats

- Original paper: Oxley et al, n=46 ( citations). p=0.05 on a falsely binarised measure of ideology.

- Critiques: Six replications so far (Knoll et al; 3 from Bakker et al) , five negative as in nonsignificant, one forking ("holds in US but not Denmark")

(total citations: ) - Original effect size: [ ], n=

- Replication effect size: [ ], n=

Behavioural genetics

No good evidence that 5-HTTLPR is strongly linked to depression, insomnia, PTSD, anxiety, and more. See also COMT and APOE for intelligence, BDNF for schizophrenia, 5-HT2a for everything…

Be very suspicious of any such “candidate gene” finding (post-hoc data mining showing large >1% contributions from a single allele). 0/18 replications in candidate genes for depression. 73% of candidates failed to replicate in psychiatry in general. One big journal won’t publish them anymore without several accompanying replications. A huge GWAS, n=1 million: “We find no evidence of enrichment for genes previously hypothesized to relate to risk tolerance.”

[What I propose] is not a reform of significance testing as currently practiced in soft-psych. We are making a more heretical point… We are attacking the whole tradition of null-hypothesis refutation as a way of appraising theories… Most psychology using conventional H_0 refutation in appraising the weak theories of soft psychology… [is] living in a fantasy world of “testing” weak theories by feeble methods.

What now? When the next flashy WEIRD paper out of a world-class university arrives, will we swallow it?

Andrew Gelman and others suggest deflating all single-study effect sizes you encounter in the social sciences, without waiting for the subsequent shrinkage from publication bias, measurement error, data-analytic degrees of freedom, and so on. There is no uniform factor, but it seems sensible to divide novel effect sizes by a number between 2 and 100 (depending on its sample size, method, measurement noise, maybe its p-value if it’s really tiny)…

The melancholy of pareidolia

It's 3500 words, not including the Criticism section. It is rich with talk of moderators, physiological mechanisms, and practical upshots for the layman. And it is quite possible that the whole lot of it is a phantom, a giant mistake. For small effect sizes, we can't tell the difference. Even people quite a bit smarter than us can't.

If I wander around an old bookshop, I can run my fingers over sophisticated theories of ectoplasm, kundalini, past lives, numerology, clairvoyance, alchemy. Some were written by brilliant people who also discovered real things, whose minds worked, damnit.

We are so good at explaining that we can explain things which aren't there. We have made many whole libraries and entire fields without the slightest correspondence to anything. Except our deadly ingenuity.

Selection criteria

I also include cases like growth mindset, where the eventual effect size, though positive, was a tiny fraction of the hyped original claim.

I compare to the original paper's effect size because I am lazy and barely capable of the better alternative, a careful averaging of high-quality supporting papers.

Thousands of bad papers are published every year, and catching them all would be a full-time job even if they were all included in systematic replication or reanalysis projects, ripe fruit. My rule is that if I hear a spurious effect discussed, or see it in a book, or if it could hurt someone, it's noteworthy.

Why trust replications more than originals?

But I don't trust any of them. I look for 3+ failed replications from different labs, just to save me lots of rewriting, as the garden of forking paths and the mystery of the lefty p-curve unfold.

Mandatory errata

- Outliers by Malcolm Gladwell

founded on the 10,000 hours for mastery claim. - Behave by Robert Sapolsky

Himmicanes, power pose, facial feedback, ego depletion, Implicit Association, stereotype threat, broken windows theory, Macbeth effect. - Thinking, Fast and Slow by Daniel Kahneman

Entire chapter on all kinds of priming. Facial-feedback, Effects of Head-Movements on Persuasion, Location as Prime, Money Priming, Death Priming, Lady Macbeth Effect. Cognitive disfluency. Ego depletion. Wansink. Hungry judges. Denies the "hot hand". - Nudge by Thaler and Sunstein

Wansink, Baumeister, Dweck. - Smarter by Dan Hurley.

Dual n-Back and all manner of nonsense nootropics. - Peter Watts is an Angry Sentient Tumor

A sadly muddled defence of Bem

TODO

no bilingualism cognitive advantage

grit

* Heat priming

* Intelligence priming

* Disgust priming

* Honesty priming

* Cleanliness priming

* God priming boosts pro-sociality

* Social distance priming

* Commitment priming reduces forgiveness

* Achievement priming

Positive mood boost helping effect

Superiority-of-unconscious decision-making effect

Behavioral-consequences-of automatic-evaluation

Self-control relies on glucose effect

Physical warmth promotes interpersonal warmth

Power impairs perspective-taking effect

Fertility facial-preferences effect

Font disfluency impairs/improves cognitive performance

https://link.springer.com/article/10.1007/s11409-016-9154-x

Status-legitimacy effect

Red-impairs cognitive performance effect

Reduced pro-sociality of high SES effect

Moral licensing effect

Color on approach/avoidance

Playboy effect

Self-protective subjective temporal distance effect

Trait loneliness hot shower effect

Superstition boosts performance effect

Red-boosts-attractiveness effect

American flag priming boosts Republican support

Unethicality darkens perception of light

Fertility on voting

Modulation of 1/f noise on WIT

Time is money effect

Embodiment of secrets

Warmer-hearts-warmer-room effect

Treating-prejudice-with-imagery effect

Grammar influences perceived intentionality effect

Attachment-warmth embodiment effect

- A counterexample from the COVID-19 pandemic: the UK's March 2020 policy was based on the idea of behavioural fatigue and Western resentment of restrictions; that a costly prohibition would only last a few weeks before the population revolt against it, and so it had to be delayed until the epidemic's peak.

Now, this policy was so politically toxic that we know it had to be based on some domain reasoning, and it is in a way heartening that the government tried to go beyond socially naive epidemiology. But it was strongly criticised by hundreds of other behavioural scientists, who noted that the evidence for these ideas was too weak to base policy on. Here's a catalogue of bad psychological takes. - The polite convention in psychology seems to be to not mention the original effect size.

See also "the link between x and y may be more contingent than previously assumed" or

"we found strong moderators for the association between x and y". - Semantic priming is still solid, but the effect lasts only seconds.

radishGroupMean = 8.35 #minutes

controlGroupMean = 20.86

radishGroupSd = 4.67

controlGroupSd = 7.30

radishGroupN = 22

controlGroupN = 22

cohens_d(radishGroupMean, controlGroupMean, radishGroupSd, controlGroupSd, radishGroupN, controlGroupN, True)incompetence, like anosognosia, not only causes poor performance but also the inability to recognize that one's performance is poor.

- original paper- Ignore the magnitudes, this is made up data.

See also

- A review of 2500 social science papers, showing the lack of correlation between citations and replicability, between journal status and replicability, and the apparent lack of improvement since 2009.

- Discussion on Everything Hertz, Hacker News, Andrew Gelman, some star data thugs comment.

Acknowledgments

Thanks to Andrew Gelman, Stuart Ritchie, Anne Scheel, Daniël Lakens, Gwern Branwen, and Nick Brown for pointers to effectively all of these.

All honour to the hundreds of data thug / methodological terrorist psychologists I’ve cited, who in the last decade began the hard work of cleaning up their field.

Matt VE commented on 28 June 2021 :

Paul M commented on 28 June 2021 :

Oliver C. Schultheiss commented on 28 June 2021 :

Niall commented on 28 June 2021 :

Adam commented on 30 June 2021 :

Kasey Orvidas commented on 18 July 2021 :

Luke Miles commented on 22 July 2021 :

Tom commented on 26 July 2021 :

Max Ra commented on 20 October 2021 :

Alf Stobbs commented on 01 January 2023 :