I was at a corporate team-building event, because I wasn’t persuasive enough to not be. I was a prisoner.

The organisers set up a game: a three-player, unknown-length iterated Prisoner’s dilemma. There was no initial discussion, but free discussion every two rounds. Payoffs were the standard unitless numbers, shifted so that some outcomes were negative. Scores began at zero. No objectives were given.

| All co-op | Some defect | All defect |
|---|---|---|
| All: +1 | Defectors: +2; co-operators: -1 | All: -2 |

I swear I am not making up the roles the players tacitly settled into: perfect archetypes of game theory.

- a Homo economicus (who clearly took Micro 101 and nothing further).¹

- an ineffective altruist, trying to get everyone to the global maximum, but without any leverage or provocability. Opened with co-operate, tried again after every negotiation round until the second half when he got in a huff.

- a noise generator. Random action, or action based on his reading of opponents’ body language.

A:

It’s totally straightforward, there’s only one right answer: for Prisoner’s dilemmas, the only Nash equilibrium is ‘always defect’, because it only makes sense to defect in the final round, and the inference to prior rounds is timeless.

Me:

No, that’s for the known-length case with two players! And for when you can assume perfectly rational opponents! And for when their actions are independent of yours, with no communication! And you’re aiming for personal loss minimisation, but you weren’t given a loss function: you don’t know that negative scores are non-fatal (or that they allow for “losing the least”).

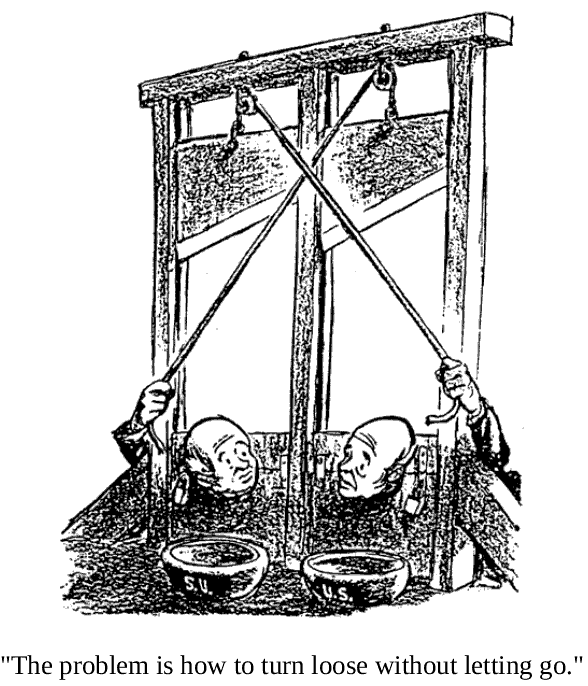

He didn’t listen. Heedless in the grip of theory, the ecstasy of proof, (A) defected every time. During the communication, he was sometimes honest about his strategy and sometimes pretended to accept a truce.
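A side check on A's "only one right answer": if the payoff table above is read literally (an assumption; the organisers' real scoring rule may have been different), defecting is not even a dominant move within a single round. The sketch below enumerates one player's best response to each combination of the other two, then lists the one-round profiles where nobody gains by switching. The names `payoffs`, `best_responses` and `pure_nash_equilibria` are invented here for illustration.

```python
from itertools import product

C, D = "C", "D"


def payoffs(actions):
    """Score one round, reading the payoff table literally (an assumption)."""
    defectors = actions.count(D)
    if defectors == 0:
        return [1] * len(actions)          # all co-operate
    if defectors == len(actions):
        return [-2] * len(actions)         # all defect
    return [2 if a == D else -1 for a in actions]  # mixed round


def best_responses(others):
    """Actions that maximise one player's one-round payoff against `others`."""
    score = {a: payoffs([a, *others])[0] for a in (C, D)}
    top = max(score.values())
    return {a for a in score if score[a] == top}


def pure_nash_equilibria():
    """One-round profiles in which every player is already best-responding."""
    found = []
    for profile in product((C, D), repeat=3):
        stable = all(
            profile[i] in best_responses(profile[:i] + profile[i + 1:])
            for i in range(3)
        )
        if stable:
            found.append("".join(profile))
    return found


if __name__ == "__main__":
    for others in product((C, D), repeat=2):
        print("".join(others), "->", sorted(best_responses(others)))
    print(pure_nash_equilibria())  # ['CDD', 'DCD', 'DDC']
```

Under that literal reading, a lone co-operator facing two defectors scores -1 rather than -2, so all-defect is not stable even in the one-shot game: the stable one-round outcomes are the three with exactly one co-operator.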

The game ran about 15 rounds, I think ending early in despair. We ended with all scores negative, between 1 and 10 points under. It was announced that everyone had lost, since -1 represented death. Everyone sulked, especially the organisers.

This was in fact an incredible lesson, but not the one the organisers wanted me to learn.

Decisions make no sense without a loss function! Probably the organisers had no idea about loss functions or equilibria or mathematical induction backwards in time, but I could have drawn it out of them. (Scary thought: society depends on statistical inference, and yet some massive majority of those inferences (the null-hypothesis significance tests) are made in total ignorance of their implicit decision theory.)

What’s wrong with co-operating every time? Well, setting aside the poor bot’s own (generally terrible) outcome: it invites exploitation, and so can actually be destabilising in a sense, compared to precommitted tit-for-tat.
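A minimal sketch of that claim, using the payoff table above read literally. Two things here are assumptions rather than anything from the actual game: the three-player version of tit-for-tat (co-operate first, then defect whenever any other player defected in the previous round) and the fixed 10-round length. All function names are hypothetical.

```python
C, D = "C", "D"


def payoffs(actions):
    """Score one round from the payoff table (read literally)."""
    defectors = actions.count(D)
    if defectors == 0:
        return [1] * len(actions)
    if defectors == len(actions):
        return [-2] * len(actions)
    return [2 if a == D else -1 for a in actions]


def always_defect(history, me):
    return D


def always_cooperate(history, me):
    return C


def tit_for_tat(history, me):
    """Assumed 3-player variant: retaliate if anyone else defected last round."""
    if not history:
        return C
    others = [a for i, a in enumerate(history[-1]) if i != me]
    return D if D in others else C


def play(strategies, rounds):
    """Run the iterated game and return each player's total score."""
    history = []
    scores = [0] * len(strategies)
    for _ in range(rounds):
        actions = [s(history, i) for i, s in enumerate(strategies)]
        for i, p in enumerate(payoffs(actions)):
            scores[i] += p
        history.append(actions)
    return scores


if __name__ == "__main__":
    # Defector, unconditional co-operator, retaliator.
    print(play([always_defect, always_cooperate, tit_for_tat], 10))  # [20, -10, 17]
    # Defector against two retaliators.
    print(play([always_defect, tit_for_tat, tit_for_tat], 10))       # [-16, -19, -19]
```

With an unconditional co-operator in the room the defector finishes on +20, and even the retaliator is paid to keep defecting; against two retaliators the defector finishes on -16. The retaliators pay for it too, at -19 each under this table.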

It is really amazing how stupidly a clever person can act if they are relying on a clever false theory. This can result from any method, any species of reasoning, but using maths badly is the most complete way of disabling such a person. This is why, despite appearances, we have to listen to mouthy gits like Taleb: there really are model error monsters out there.

Even in the absence of A’s model error, the presence of noise would have completely destabilised the equilibrium anyway. We were doubly doomed.

The game wasn’t long enough for the other key ingredient of super-rationality to arise: forgiveness, necessary in all closed-source stochastic domains, like life.
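A toy illustration of the last two points, with the noise reduced to a single accidental defection so that the run is deterministic. The strategies are assumed three-player generalisations: tit-for-tat defects if any other player defected last round, and tit-for-two-tats (a standard forgiving variant) defects only if some other player defected in each of the last two rounds. The names and the `slip_round` parameter are hypothetical.

```python
C, D = "C", "D"


def someone_else_defected(round_actions, me):
    return any(a == D for i, a in enumerate(round_actions) if i != me)


def tit_for_tat(history, me):
    """Retaliate immediately after any defection by another player."""
    if not history:
        return C
    return D if someone_else_defected(history[-1], me) else C


def tit_for_two_tats(history, me):
    """Forgive a single defection; retaliate only after two rounds in a row."""
    if len(history) < 2:
        return C
    two_in_a_row = all(someone_else_defected(r, me) for r in history[-2:])
    return D if two_in_a_row else C


def play_with_slip(strategy, rounds, slip_round, slip_player=0):
    """Three identical players; one of them defects by accident exactly once."""
    history = []
    for t in range(rounds):
        actions = [strategy(history, i) for i in range(3)]
        if t == slip_round:
            actions[slip_player] = D  # the noise: a single unintended defection
        history.append(actions)
    return ["".join(r) for r in history]


if __name__ == "__main__":
    print(play_with_slip(tit_for_tat, 6, slip_round=2))
    # ['CCC', 'CCC', 'DCC', 'CDD', 'DDD', 'DDD']
    print(play_with_slip(tit_for_two_tats, 6, slip_round=2))
    # ['CCC', 'CCC', 'DCC', 'CCC', 'CCC', 'CCC']
```

One slip is enough to lock three unforgiving retaliators into permanent mutual defection, while the forgiving variant absorbs it and carries on co-operating.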

Body language reading could be a real skill, but either way I think most people don’t have enough skill to substitute for outside-view reasoning - even in toy examples like the above.

Anthropics and game theory only work on themselves

When reasoning about what I should do, if I reflect on what my predecessors must have done in order for me to exist, and then generalise this to my descendants (since they are a sort of partial copy of me), I could convince myself that I should do what would maximise my chance of coming into being. (This is all under the assumption that I am the sort of being which should exist, at least equally compared to the counterfactual people in other chains of descent.) But this doesn’t work unless the other people in the chain are also doing anthropics.

Similarly, you don’t benefit from doing game theory on an unpredictable opponent (for instance one who doesn’t know or rejects game theory).

See also

- The Use and Abuse of Formal Models in Political Philosophy, Robert Paul Wolff.

Thanks to Misha Yagudin for the anthropics point.

1. Not a real Homo economicus, obviously: instead a Homo sapiens running a bad simulation of one, at the same time shutting down the common sense that might have saved him.
